Deep learning is a subject of interest for many researchers and engineers, but experimenting with it requires a decent GPU. Without one, computations may take weeks, and the time needed to obtain results becomes a showstopper.

To solve this issue, we built a machine that delivers results fast, even for the most challenging tasks. Meet Balrog – Tooploox’s Deep Learning Box.

Deep learning GPUs

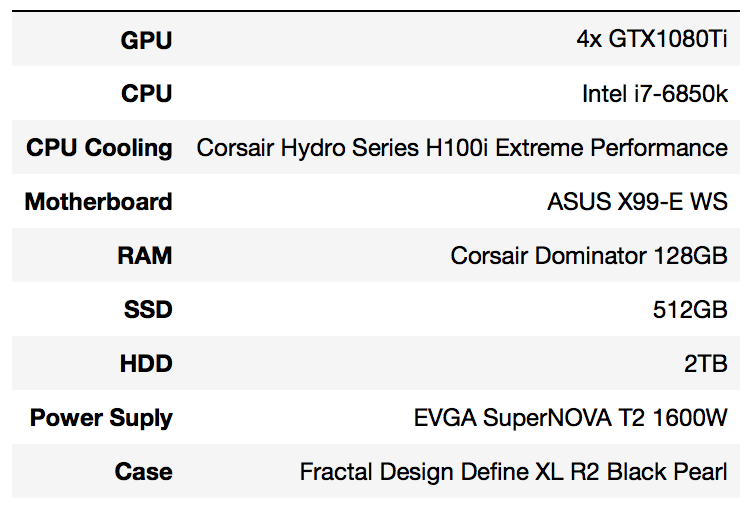

The hardest part was choosing the right components, especially the GPUs. At first we considered the Nvidia Titan X; however, its high price and limited availability (you can buy the Titan X only directly from Nvidia, and no more than 2 cards per customer) forced us to look for alternatives. Luckily, Nvidia introduced the GTX 1080Ti, which offers great performance at a reasonable price and is available from a number of resellers. Also, for the price of two Titan Xs, we got four GTX 1080Tis. Here is the full setup:

Assembling the system from these components cost us roughly $8,000 in May 2017.

Computer motherboard for deep learning

An interesting choice in our setup is the motherboard. At first we considered the ASUS Rampage V Edition 10, but after we mounted 4 GPUs, the system became unstable. ASUS support was also unable to resolve the issue and recommended waiting for a BIOS update. We didn’t have that much time, so we decided to pick a different motherboard.

“To SLI or not to SLI” in deep learning GPU?

The answer is: no SLI, at least for our needs. SLI links cards together for rendering graphics, while CUDA-based deep learning frameworks address each GPU directly, and that direct access is what we want for running our code. The same holds for most deep learning applications. If you are building a gaming machine, however, SLI is the right way to go.
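
Because frameworks such as TensorFlow or PyTorch reach each card through CUDA rather than through an SLI bridge, a common pattern is to pin each process to a single GPU with the `CUDA_VISIBLE_DEVICES` environment variable and run several experiments side by side. The sketch below only builds the per-process environments and command lines; the `per_gpu_jobs` helper, the `train.py` script and its `--run` flag are hypothetical placeholders, and four GPUs (indices 0 to 3) are assumed to match our box.

```python
import os
import sys
from typing import Dict, List, Tuple


def per_gpu_jobs(num_gpus: int, script: str = "train.py") -> List[Tuple[Dict[str, str], List[str]]]:
    """Build one (environment, argv) pair per GPU.

    Each job sees exactly one card; CUDA renumbers the visible devices,
    so inside the process that card always appears as device 0.
    `script` and the `--run` flag are hypothetical placeholders.
    """
    jobs = []
    for gpu in range(num_gpus):
        env = os.environ.copy()
        env["CUDA_VISIBLE_DEVICES"] = str(gpu)  # hide every other GPU from this process
        argv = [sys.executable, script, "--run", f"gpu{gpu}"]
        jobs.append((env, argv))
    return jobs


if __name__ == "__main__":
    for env, argv in per_gpu_jobs(4):
        print(env["CUDA_VISIBLE_DEVICES"], " ".join(argv))
        # To actually launch a job: subprocess.Popen(argv, env=env)
```

Launching each `argv` with `subprocess.Popen(argv, env=env)` would give four independent runs, one per card, with no SLI involved.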

Choosing an operating system for deep learning

We decided to use Ubuntu 16.04 because it is well documented and has a proven track record in successful machine learning projects.

The number of machine learning libraries is growing faster than one can install them. Therefore, instead of polluting the operating system, we went with nvidia-docker. It allows running dockerized frameworks such as Caffe, PyTorch, or TensorFlow, and creating images for many others.
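
As a rough illustration of this workflow, the sketch below assembles an nvidia-docker command line without executing it. It assumes the nvidia-docker 1.x wrapper is on the `PATH` and relies on its `NV_GPU` environment variable to choose which cards a container sees (when `NV_GPU` is unset, all GPUs are exposed). The `nvidia_docker_command` helper is hypothetical, and running `nvidia-smi` in the `nvidia/cuda` image is used only as a quick check that the GPUs are visible inside the container.

```python
import os
from typing import Dict, List, Optional, Sequence, Tuple


def nvidia_docker_command(
    image: str,
    command: Sequence[str],
    gpus: Optional[Sequence[int]] = None,
) -> Tuple[Dict[str, str], List[str]]:
    """Return (environment, argv) for running `command` inside `image`.

    Assumes the nvidia-docker 1.x wrapper, which reads NV_GPU to decide
    which GPUs to expose to the container.
    """
    env = os.environ.copy()
    if gpus is None:
        env.pop("NV_GPU", None)  # unset: expose every GPU
    else:
        env["NV_GPU"] = ",".join(str(g) for g in gpus)  # e.g. "0,1"
    argv = ["nvidia-docker", "run", "--rm", image, *command]
    return env, argv


if __name__ == "__main__":
    env, argv = nvidia_docker_command("nvidia/cuda", ["nvidia-smi"], gpus=[0, 1])
    print("NV_GPU=" + env["NV_GPU"], " ".join(argv))
    # To actually run it: subprocess.run(argv, env=env, check=True)
```

Keeping each framework in its own image means a broken dependency in one experiment never touches the host system or the other frameworks.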

Benchmarks of our deep learning computer

We were not particularly interested in benchmarking the GPUs themselves; however, we verified that a configuration similar to ours (also 4x GTX 1080Ti) obtained the leading score in each of the 3DMark Fire Strike benchmarks.

We also ran the TensorFlow benchmarks, following the methodology described on the linked page. Without going too much into detail, this benchmark measures how fast different state-of-the-art deep learning models for image classification process images. For the purpose of this post, we focused only on synthetic data, which keeps disk I/O and input preprocessing out of the measurement.
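
The headline figure of such a benchmark is throughput in images per second. A minimal sketch of that calculation, assuming a fixed per-GPU batch size, a list of measured step durations, and a few initial warm-up steps discarded before averaging; the `images_per_second` helper and the default of 10 warm-up steps are illustrative choices of ours, not taken from the benchmark code:

```python
from statistics import mean
from typing import Sequence


def images_per_second(
    step_times: Sequence[float],
    batch_size_per_gpu: int,
    num_gpus: int,
    warmup_steps: int = 10,
) -> float:
    """Average throughput over timed steps, ignoring warm-up steps.

    Every step processes batch_size_per_gpu images on each GPU, so one
    step covers batch_size_per_gpu * num_gpus images in total.
    """
    timed = step_times[warmup_steps:]
    if not timed:
        raise ValueError("no steps left after discarding warm-up")
    images_per_step = batch_size_per_gpu * num_gpus
    # Total images / total time equals images per step / mean step time.
    return images_per_step / mean(timed)
```

Warm-up steps are dropped because the first iterations typically include one-off costs such as graph setup and memory allocation, which would otherwise drag the average down.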

First, we defined our environment as follows:

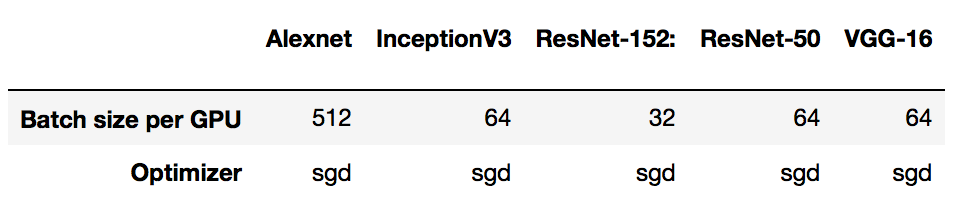

For each model, we used the following batch size and optimizer settings:

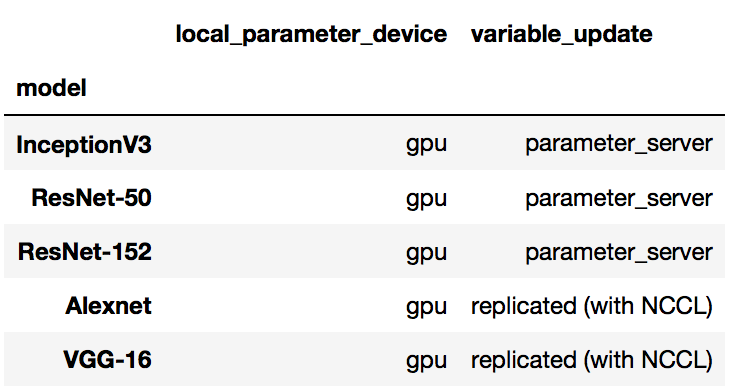

Finally, we defined the benchmark parameters as follows:

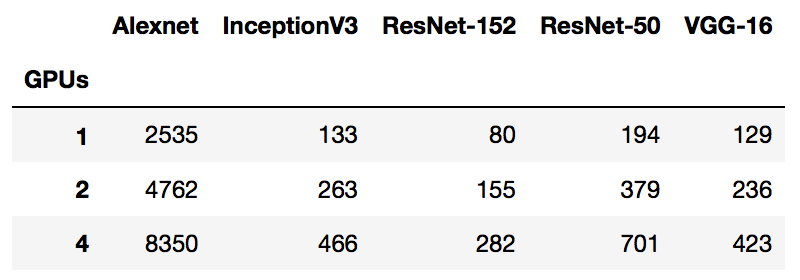

The table below shows the number of images processed per second by each model, depending on the number of GPUs:

We were also interested in how much adding GPUs increases the total processing speed. Ideally this dependency would be linear; however, as the following chart shows, that is not necessarily the case.
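
One way to quantify how far the scaling falls short of linear is to divide the measured speedup by the number of GPUs. The sketch below computes the speedup S(n) = T(n) / T(1) and the efficiency E(n) = S(n) / n from throughput values T(n) in images per second; the `scaling_report` helper is hypothetical, and the numbers in the example line are made up for illustration, not our benchmark results.

```python
from typing import Dict


def scaling_report(throughput: Dict[int, float]) -> Dict[int, Dict[str, float]]:
    """Speedup and efficiency relative to a single GPU.

    `throughput` maps GPU count -> images/sec and must contain key 1.
    An efficiency of 1.0 means perfectly linear scaling.
    """
    base = throughput[1]
    report = {}
    for n, value in sorted(throughput.items()):
        speedup = value / base
        report[n] = {"speedup": speedup, "efficiency": speedup / n}
    return report


# Hypothetical numbers for illustration only, not benchmark results.
example = scaling_report({1: 200.0, 2: 380.0, 4: 700.0})
# example[4] == {"speedup": 3.5, "efficiency": 0.875}
```

An efficiency noticeably below 1.0 typically points to overhead outside the GPUs' raw compute, such as synchronizing gradients between cards over PCIe.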

Summary

In the introduction, we mentioned Balrog, the name of the demonic creature from “The Lord of the Rings”. Given the computational power of our machine, we found it fitting to name it after this beast. It also follows the naming convention at Tooploox… well, Balrog is actually located in a room called Moria.

We also recommend Tim Dettmers’ excellent post, which is kept up to date and should be read by everyone who plans to build a similar machine.