Building exciting PoCs for internal purposes

In the previous blog post about Natural Language Processing, Dominika Basaj shared her insights about the robustness of neural language models. At Tooploox, apart from carrying out research and delivering working solutions to clients, we also build internal tools to develop our skills and expand our expertise through a creative process. In this spirit, we created a simple, NLP-based proof of concept to show what kind of information we can retrieve from the noisy, everyday speech found in videos.

Imagine that a friend recommends a cool video to you without giving any details about its content.

As sometimes happens, you are extremely busy with another task, but you still want a quick overview of the recommendation to see whether it fits your interests at all. This is where our tool lends a helping hand. Its main aim is to retrieve the textual content of YouTube videos and then use standard unsupervised NLP techniques to extract the most meaningful information from that text. Sounds pretty straightforward, but how do we really get there?

Can you describe this video in a few words?

First of all, we need to emphasize that language in videos is very diverse and should be approached carefully. Not only does it depend on the context (e.g. you speak differently about your day in a daily vlog than when explaining a difficult educational concept), but it may also involve interjections – expressions that usually show spontaneous reactions or mark hesitation or a break (like “uhmm”, “yeah”, “okay”, “hm”). These phrases, common in spoken language, may interfere with the keyword extraction process, particularly when it relies on existing language corpora built from written text. To tackle this problem, we collected a custom dataset of video-based text representations from various categories and created an n-gram language model that incorporates spoken language characteristics.
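To make the idea of an n-gram language model concrete, here is a minimal sketch of a bigram model with add-one smoothing. It only illustrates the general technique and is not the model used in our tool: the class name `BigramModel`, the two toy transcripts, the choice of n = 2, and the smoothing scheme are all assumptions made for this example.

```python
from collections import Counter


class BigramModel:
    """Toy bigram language model with add-one (Laplace) smoothing."""

    def __init__(self, sentences):
        self.context_counts = Counter()  # how often each word starts a bigram
        self.bigram_counts = Counter()   # how often each (prev, word) pair occurs
        self.vocab = set()
        for sentence in sentences:
            # Pad with start/end markers so sentence boundaries are modelled too.
            tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
            self.vocab.update(tokens)
            self.context_counts.update(tokens[:-1])
            self.bigram_counts.update(zip(tokens, tokens[1:]))

    def prob(self, prev, word):
        """Smoothed estimate of P(word | prev)."""
        numerator = self.bigram_counts[(prev, word)] + 1
        denominator = self.context_counts[prev] + len(self.vocab)
        return numerator / denominator


# Hypothetical transcripts that include typical spoken-language fillers.
transcripts = [
    "uhmm okay so today we talk about black holes",
    "okay so yeah the immune system is complex",
]
model = BigramModel(transcripts)
p_filler = model.prob("okay", "so")     # pair seen in both transcripts
p_unseen = model.prob("okay", "holes")  # pair never seen together
```

Because the counts come from transcripts rather than written text, filler sequences such as "okay so" receive a higher probability than unseen pairs, which is the kind of spoken-language characteristic such a model can capture.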

One of the features offered by our tool is keyword extraction. It employs one of the most popular methods for this task, namely TF-IDF. TF-IDF aims to show the relative importance of a word with respect to a specified language corpus. It consists of two parts: (a) term frequency, which measures how many times a term (be it a word or a phrase) appears in a document, and (b) inverse document frequency, which measures how rare the term is across all documents in the corpus. This way, a word that occurs frequently in one document (video) but rarely across the entire corpus is assigned a larger weight than a word that is common both in the document and in the corpus.
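The weighting described above can be sketched in a few lines of Python. This is a minimal illustration rather than the code behind our tool: the function name `tf_idf`, the toy corpus, and the specific variant (relative term frequency multiplied by the natural logarithm of N/df, with no smoothing) are assumptions chosen for clarity.

```python
import math
from collections import Counter


def tf_idf(documents):
    """Return one {term: weight} dict per tokenized document."""
    n_docs = len(documents)
    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter(term for doc in documents for term in set(doc))
    weights = []
    for doc in documents:
        counts = Counter(doc)
        total = len(doc)
        weights.append({
            # Term frequency (share of the document) times inverse document frequency.
            term: (count / total) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weights


# Hypothetical mini-corpus: each "document" stands for one video transcript.
corpus = [
    "the immune system fights bacteria and viruses".split(),
    "the black hole bends light and time".split(),
    "the star fuses hydrogen and helium".split(),
]
scores = tf_idf(corpus)
# Terms of the first transcript, highest weight first.
keywords = sorted(scores[0], key=scores[0].get, reverse=True)
```

In this toy corpus, "the" and "and" appear in every transcript, so their inverse document frequency is log(1) = 0 and they drop out as keywords, while terms unique to one transcript, such as "immune", keep a positive weight.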

Can you describe this video in your own words?

Apart from keyword extraction, we dedicated some time to exploring the field of automatic text summarization. We considered both approaches to the problem: abstractive and extractive summarization. In extractive summarization, the goal is to pick the most relevant sentences from the text and assemble them into a meaningful summary. In abstractive summarization, on the other hand, the model aims to generate new sentences that capture the relevant content of the text.

Thanks to recent advances in the NLP field, new approaches to abstractive summarization based on a seq2seq model architecture have appeared. Notably, some of them come with released pre-trained models and GitHub implementations (detailed information about papers and implementations can be found under this link).

For our experiments, we chose the implementation of Chen and Bansal’s paper “Fast Abstractive Summarization with Reinforce-Selected Sentence Rewriting”, as we found it the simplest to integrate with the other components of our project. The model was trained on the CNN/DM dataset of news articles and their summaries. It consists of two main components: an extractor, which selects the most important sentences, and an abstractor, which rewrites them. Because we lacked suitable data, we could not fine-tune the model. Despite the promising results reported on the CNN/DM test set, we weren’t able to obtain meaningful results for our purpose. We didn’t expect miracles, but you can see some of its ridiculous mistakes below.

Let’s Run The Abstractive Summarization Model On The Video About The Immune System

billions of bacteria, viruses, and fungi are trying to make you their home .

it has 21 different cells and 2 protein forces these cells .

bodies have developed a super complex little army with guards, soldiers, weapons factories, .

this video, let us assume the immune system has 12 different jobs .

us assign them .

What About A Video About Black Holes?

what happens if you fall into one? stars are incredibly massive collections .

in their core, nuclear fusion crushes hydrogen atoms into helium releasing a tremendous amount of energy .

there is fusion in the core, a star remains stable enough .

where do they come from .

As you can see, this particular model does not perform well on our data. The sentences seem to make sense at first glance, and you could probably guess the general topic of the video from them, but they are often truncated, ungrammatical, and in places factually distorted.

Okay, but what is it really about?

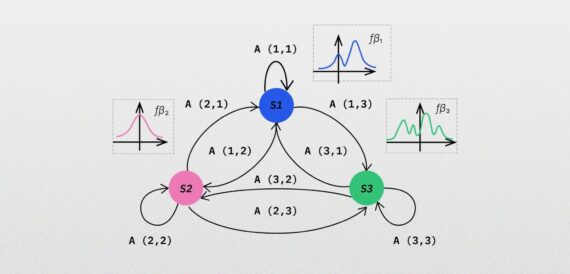

Under these circumstances, we decided to choose an extractive summarization algorithm – TextRank. As its name suggests, it is an adaptation of the PageRank algorithm created for ranking web pages. Just as PageRank builds a transition probability matrix over web pages, TextRank builds a graph in which sentences are nodes and edges are weighted by the similarity between sentences, so a sentence that is similar to many other sentences receives a higher score. Despite its simplicity, this method met our expectations and highlighted the parts of the text that indeed conveyed important messages.
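For readers who want to see the mechanics, here is a minimal, dependency-free sketch of TextRank-style sentence ranking. It is an illustration under stated assumptions, not our production code: the helper names (`tokenize`, `similarity`, `textrank`, `summarize`), the word-overlap similarity normalized by log lengths, the damping factor of 0.85, the fixed number of iterations, and the example sentences are all choices made for this example.

```python
import math
import re


def tokenize(sentence):
    """Lowercase a sentence and return its set of words."""
    return set(re.findall(r"[a-z']+", sentence.lower()))


def similarity(a, b):
    """Word overlap between two token sets, normalized by their log sizes."""
    denominator = math.log(len(a)) + math.log(len(b)) if a and b else 0.0
    return len(a & b) / denominator if denominator > 0 else 0.0


def textrank(sentences, damping=0.85, iterations=50):
    """Score sentences with a PageRank-style iteration over a similarity graph."""
    tokens = [tokenize(s) for s in sentences]
    n = len(sentences)
    # Edge weights: similarity between every pair of distinct sentences.
    weights = [
        [similarity(tokens[i], tokens[j]) if i != j else 0.0 for j in range(n)]
        for i in range(n)
    ]
    out_sum = [sum(row) for row in weights]
    scores = [1.0] * n
    for _ in range(iterations):
        # Each sentence receives a share of its neighbours' scores,
        # proportional to how strongly they are connected to it.
        scores = [
            (1 - damping) + damping * sum(
                weights[j][i] / out_sum[j] * scores[j]
                for j in range(n) if out_sum[j] > 0
            )
            for i in range(n)
        ]
    return scores


def summarize(sentences, k=2):
    """Return the k best-scoring sentences in their original order."""
    scores = textrank(sentences)
    best = sorted(range(len(sentences)), key=lambda i: scores[i], reverse=True)[:k]
    return [sentences[i] for i in sorted(best)]


# Hypothetical transcript fragment with one off-topic sentence.
transcript = [
    "The immune system protects the body from bacteria and viruses.",
    "White blood cells are the soldiers of the immune system.",
    "Bacteria and viruses attack the cells of the body every day.",
    "My cat enjoys sleeping on warm laundry.",
]
ranking = textrank(transcript)
summary = summarize(transcript, k=2)
```

The off-topic sentence shares no words with the others, so it has no incoming edges and ends up with the lowest score, while sentences that overlap with many others rise to the top of the ranking.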

Let’s take a look at the video about the immune system to see how the tool performs!

We are happy to share with you a description of a tool that shows how popular NLP methods can be tailored and applied to a novel problem. With relatively little effort and a constrained timeline (from zero to hero in just one month!), we managed to create a working solution and learn a lot! The current state of the project also serves as a great starting point for implementing more sophisticated features, such as the already mentioned abstractive summarization or named entity recognition. If you would like to see the tool in action or you are wondering how we can help with your language-related problem, do not hesitate to contact us!