Let’s imagine that you are furnishing your house. You are surfing the web and you find a stylish, modern armchair that you would definitely want to have in your living room. Unfortunately, the webpage gives no information about where you can purchase one, let alone how to find a coffee table that would match your dream armchair. This is where Style Search comes in.

Some time ago one of our Tooploox colleagues wrote a post about building a search engine for interior design, which worked really well at retrieving visually similar images. We wanted our engine not only to find images that share common visual features, but also to find items that represent abstract concepts, such as minimalism or Scandinavian design. That is why we decided to extend our Style Search engine, which was based on visual search, by adding text query search.

Dataset

In order to create the Style Search engine we needed a dataset consisting of both images and text descriptions. The content of IKEA’s website met our requirements. I scraped images of 2193 products from it, along with a text description of every product, and 298 images of rooms in which these products appeared. For this purpose I used Python with the beautifulsoup and urllib packages, as well as Selenium.

Once I had a dataset, the next thing I needed was a representation of the furniture that would be understandable for a computer. The inspiration came from a state-of-the-art class of models that are used to create word embeddings, namely word2vec.

Word2vec

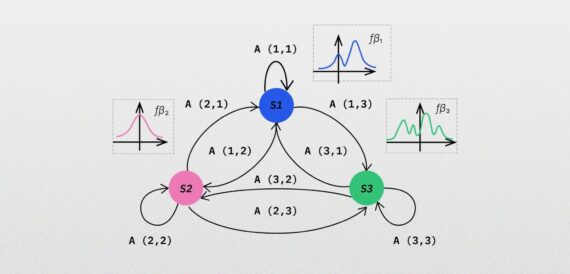

Word2vec basically transforms words into vectors in such a way that words sharing a similar meaning lie close to each other in the embedding space. There are two main classical models in the word2vec family: continuous bag-of-words (CBOW) and skip-gram. For our task I used the first one (CBOW). I will cover the details of the algorithm in my next post; for now, you can read more about it here.

What is really cool about having a vector representation of words is that you can perform arithmetic operations on it to discover subtle dependencies between words. Take a look at some examples:

King - man + woman = queen

Paris - France + Poland = Warsaw

Amazing, isn’t it?
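To make the arithmetic concrete, here is a minimal sketch that uses hand-made 2-D toy vectors rather than trained embeddings. The vectors, the `analogy` helper and the extra word "apple" are all made up for illustration; real word2vec vectors have many more dimensions and the result is only approximately the expected word.

```python
import math

# Hand-made toy vectors (NOT trained): axis 0 ~ "royalty", axis 1 ~ "gender".
toy_vectors = {
    "king": [1.0, 1.0],
    "queen": [1.0, -1.0],
    "man": [0.0, 1.0],
    "woman": [0.0, -1.0],
    "apple": [-1.0, 0.1],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def analogy(positive, negative, vectors):
    """Return the word closest to sum(positive) - sum(negative), excluding the input words."""
    dim = len(next(iter(vectors.values())))
    target = [0.0] * dim
    for word in positive:
        target = [t + v for t, v in zip(target, vectors[word])]
    for word in negative:
        target = [t - v for t, v in zip(target, vectors[word])]
    candidates = [w for w in vectors if w not in positive and w not in negative]
    return max(candidates, key=lambda w: cosine_similarity(target, vectors[w]))

result = analogy(["king", "woman"], ["man"], toy_vectors)
print(result)  # queen
```

The input words are excluded from the candidates because the nearest neighbour of the combined vector is often one of the inputs themselves.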

Our approach

In order to produce a vector representation of words we need a set of sentences that will serve as input. In a sentence every word appears in a specific context (the nearby words). The CBOW model takes the context of a word and tries to predict the word that appears in that context.
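The notion of context can be sketched in a few lines of plain Python. The `cbow_pairs` helper and the example sentence below are hypothetical; the sketch only shows how (context, target) training pairs could be formed, not how the network itself is trained.

```python
def cbow_pairs(tokens, window=2):
    """Return (context, target) pairs: the words within `window` positions of each target."""
    pairs = []
    for i, target in enumerate(tokens):
        left = tokens[max(0, i - window):i]
        right = tokens[i + 1:i + 1 + window]
        pairs.append((left + right, target))
    return pairs

sentence = ["the", "armchair", "matches", "the", "coffee", "table"]
pairs = cbow_pairs(sentence, window=1)
for context, target in pairs:
    print(context, "->", target)
```

With `window=1`, the context of "armchair" is `["the", "matches"]`, and CBOW would be trained to predict "armchair" from those two words.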

In our task we looked for a representation of furniture rather than words. We used the descriptions of various rooms, such as living rooms or kitchens, to infer the context of every item (i.e. the other items that appeared in the same room). Every item in our dataset was assigned a unique ID. We fed the IDs of items that appeared in the same context to the algorithm to obtain the furniture representation. It is worth mentioning that the embedding was trained without relying on any linguistic knowledge, since the only information the model saw during training was whether given items appeared in the same room or not.
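A rough sketch of how rooms could be turned into training "sentences" of item IDs is shown below. The room names, the IDs, the `min_items` filter and the `window` and `min_count` values are assumptions made for illustration, not the settings used in the project. The gensim call assumes gensim 4.0 or newer and is imported lazily, so the corpus-building part runs without it.

```python
# Hypothetical data: room name -> IDs of the items pictured in that room.
rooms = {
    "living_room_01": ["id_102", "id_017", "id_233"],
    "kitchen_04": ["id_310", "id_017"],
    "bathroom_02": ["id_411"],
}

def build_item_sentences(room_to_items, min_items=2):
    """Turn each room into one 'sentence' of item IDs; skip rooms too small to provide context."""
    return [list(items) for items in room_to_items.values() if len(items) >= min_items]

def train_item_embeddings(sentences, dim=25):
    """Train CBOW embeddings with gensim (assumes gensim >= 4.0 is installed)."""
    from gensim.models import Word2Vec  # lazy import: only needed when training
    # sg=0 selects CBOW; window and min_count are illustrative guesses.
    model = Word2Vec(sentences=sentences, vector_size=dim, window=5, min_count=1, sg=0, seed=0)
    return model.wv

sentences = build_item_sentences(rooms)
print(sentences)
```

With gensim installed, `train_item_embeddings(sentences)["id_017"]` would return a 25-dimensional vector for that item. A corpus this small is of course far too small to yield meaningful embeddings.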

In order to optimize the hyperparameters of the model we ran a set of experiments and performed cluster analysis of the resulting embeddings. To visualize the embeddings we used the t-SNE dimensionality reduction algorithm, introduced by Laurens van der Maaten and Geoffrey Hinton in 2008. This algorithm takes a set of points from a high-dimensional space (in our case these were furniture embedding vectors, each of dimension 25) and finds a faithful representation of these points in a lower-dimensional space (typically 2D) that, broadly speaking, aims at preserving neighborhoods. This means that points that are close to each other in the source space land close to each other in the target space, while points that are originally far apart tend to remain distant after the mapping.
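For reference, a projection like this could be sketched with scikit-learn as follows. The `project_to_2d` and `safe_perplexity` helpers, the default perplexity of 30 and the capping heuristic are assumptions for illustration, not the settings used in our experiments. Recent scikit-learn versions reject a perplexity that is not smaller than the number of samples, hence the cap; numpy and scikit-learn are imported lazily inside the function.

```python
def safe_perplexity(n_samples, requested=30.0):
    """Cap the perplexity for small datasets (heuristic: at most a third of the samples)."""
    return min(requested, max(1.0, (n_samples - 1) / 3.0))

def project_to_2d(vectors, requested_perplexity=30.0, seed=0):
    """Project embedding vectors to 2-D with t-SNE (assumes numpy and scikit-learn are installed)."""
    import numpy as np
    from sklearn.manifold import TSNE
    data = np.asarray(vectors, dtype=float)
    tsne = TSNE(
        n_components=2,
        perplexity=safe_perplexity(len(data), requested_perplexity),
        init="pca",
        random_state=seed,
    )
    return tsne.fit_transform(data)

print(safe_perplexity(2193))  # 30.0
print(safe_perplexity(10))    # 3.0
```

Calling `project_to_2d` on an array of shape (2193, 25) would return an array of shape (2193, 2) that can be passed to any scatter-plot function.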

For word2vec training and the t-SNE embedding I used the gensim and scikit-learn Python packages. Below you can find a visualization of the embeddings obtained after dimensionality reduction.

It is easy to see that some items, e.g. ones that appear in a bathroom or a baby room, are clustered in the same region of the space.

LSTM

The main task of the textual search was to allow the user to ask for furniture representing a specific style (e.g. minimalism) or having a particular characteristic (e.g. comfortable, colorful), and to return matching items.

So, once we had a proper representation of furniture in the vector space, we needed some kind of mapping between user text queries and furniture. To handle this problem we trained a neural network based on the Long Short-Term Memory (LSTM) architecture. Given a user text query, we wanted to predict the k nearest furniture items that match the query. We trained our model to minimize the cosine distance between the item embedding predicted from the item's description and the ground-truth furniture embedding. For network training I used the Keras Python library, which I highly recommend, especially for beginner Deep Learning researchers. It lets you define even a fairly complicated model in only a few lines of code.
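The retrieval step can be sketched in plain Python as follows. The 3-D embeddings, the item names and the `predicted` vector (a stand-in for the network output for some query) are hypothetical; the sketch shows only the cosine distance and the nearest-neighbour lookup, not the LSTM itself.

```python
import math

def cosine_distance(a, b):
    """1 - cosine similarity: 0 means same direction, 2 means opposite directions."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm

def k_nearest_items(predicted, item_embeddings, k=3):
    """Return the IDs of the k items whose embeddings are closest to `predicted`."""
    ranked = sorted(
        item_embeddings,
        key=lambda item_id: cosine_distance(predicted, item_embeddings[item_id]),
    )
    return ranked[:k]

# Hypothetical 3-D embeddings; the furniture vectors in the post have 25 dimensions.
item_embeddings = {
    "armchair": [0.9, 0.1, 0.0],
    "sofa": [0.8, 0.3, 0.1],
    "bath_mat": [0.0, 0.1, 0.9],
    "crib": [0.1, 0.9, 0.2],
}
predicted = [1.0, 0.2, 0.0]  # stand-in for the network output for a text query
nearest = k_nearest_items(predicted, item_embeddings, k=2)
print(nearest)  # ['armchair', 'sofa']
```

Cosine distance ignores vector length, so only the direction of the predicted embedding matters when ranking items.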

Results

Next, we integrated the textual and visual search into a Flask-based web application. It allows the user to upload an image of a room or a piece of furniture and to describe it, so that the app looks for visually similar furniture as well as furniture matching the text description. Afterwards it returns blended results, i.e. furniture that is stylistically similar to the user's query. In the web app, blending is done simply by taking the top results from both the visual and the textual search engine. We tried some other blending methods, but this is a topic for another blog post.
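One possible way to take the top results from both engines is sketched below. The alternating order, the duplicate handling and the `blend_top_results` name are assumptions made for illustration; the web app may combine the two lists differently.

```python
from itertools import zip_longest

def blend_top_results(visual_ranked, text_ranked, n_total=6):
    """Alternate between the two ranked lists, skipping duplicates, until n_total items are collected."""
    blended = []
    seen = set()
    for pair in zip_longest(visual_ranked, text_ranked):
        for item_id in pair:
            if len(blended) >= n_total:
                return blended
            # zip_longest pads the shorter list with None
            if item_id is not None and item_id not in seen:
                seen.add(item_id)
                blended.append(item_id)
    return blended

# Hypothetical ranked results from the two engines, best match first.
visual = ["armchair_a", "armchair_b", "sofa_c", "stool_d"]
textual = ["table_x", "armchair_a", "lamp_y"]
blended = blend_top_results(visual, textual, n_total=5)
print(blended)  # ['armchair_a', 'table_x', 'armchair_b', 'sofa_c', 'lamp_y']
```

Alternating keeps both engines represented near the top of the list even when one of them returns many more candidates than the other.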

Summary

The project was the result of my 3-month internship at Tooploox. I have learnt that word2vec is a really powerful tool when it comes to Natural Language Processing. What I found truly amazing was that, given a proper context, the algorithm was able to find a good representation of things as abstract as furniture, without incorporating any linguistic knowledge. Getting a little deeper into neural networks and Keras and implementing my first neural network was also very valuable for me. If you would like to create your own word embeddings, here you can find a lot of free datasets to play with. For those of you who would like to dig deeper into neural networks for Natural Language Processing, I highly recommend CS224n.
