Safety first! No autonomous vehicle will enter the roads until we know it’s fully reliable. To make automobiles safe, the automotive industry is developing modern algorithms that analyze not only the external but also the internal environment of its products. Monitoring passengers’ behavior (e.g. stress level) may help control systems detect anomalies and handle emergencies accordingly. We’ve developed an in-car system for autonomous cars that recognizes human emotions in order to spot unexpected facial expressions onboard.

Our solution takes input from a single camera placed inside the vehicle and processes it with two deep CNN models. What do these models do? The first one detects passengers’ faces, which are aligned using facial landmarks, then cropped and fed to the second deep learning model, which recognizes emotions. In this article, we’ll walk you through the second part of the process, so if you want to know more about how AI understands human emotions – keep on reading 🙂

I want you to meet… EmotionalDAN

The architecture we created, EmotionalDAN, was inspired by the Deep Alignment Network (DAN) for face alignment. A face alignment system automatically determines the shape of face components such as the eyes and nose. In other words, such a model outputs the locations of the 68 most important facial landmarks (eye corners, lip corners, eyebrows, etc.).

Our hypothesis was that by learning to predict facial landmarks, a neural network should also get better at predicting facial expressions. As has been shown before, multi-task learning can improve learning efficiency and accuracy compared to training the models separately.

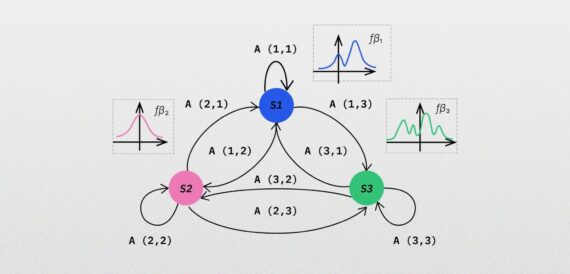

Deep Alignment Network is trained in consecutive stages that progressively refine the facial landmarks. Information is also passed between stages: the normalized face input, a feature map and a landmark heat map. These features seemed especially beneficial for learning facial expressions.

On top of the last two dense layers in the original DAN architecture, we added a new fully-connected layer for the emotion branch, with the number of neurons equal to the number of emotion classes we were trying to predict. In the literature, facial expression recognition is usually framed as a seven-class classification problem – happiness, sadness, anger, surprise, fear, disgust and neutral. We also wanted to check how the model performs on an easier and much less ambiguous task – predicting one of three emotion classes: neutral, positive and negative. Hence we experimented with both the 7-class and the 3-class classification problem.
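To make the emotion branch a bit more concrete, here is a minimal NumPy sketch of a fully-connected layer with one output neuron per class, plus one possible way to collapse the seven labels into three. The feature size, the class order and the 7-to-3 grouping (in particular putting surprise under "positive") are assumptions made for illustration, not something taken from our training code.

```python
import numpy as np

# The seven classes named in the text; the order here is an arbitrary assumption.
EMOTIONS_7 = ["neutral", "happiness", "sadness", "surprise", "fear", "disgust", "anger"]

# Hypothetical grouping into three coarse classes.
# Where "surprise" belongs is ambiguous; "positive" is an assumption.
TO_3 = {
    "neutral": "neutral",
    "happiness": "positive",
    "surprise": "positive",
    "sadness": "negative",
    "fear": "negative",
    "disgust": "negative",
    "anger": "negative",
}


def emotion_head(features, weights, bias):
    """Fully-connected layer followed by softmax.

    features: (batch, n_features), weights: (n_features, n_classes), bias: (n_classes,)
    Returns class probabilities of shape (batch, n_classes).
    """
    logits = features @ weights + bias
    # Subtract the row-wise maximum for numerical stability.
    logits = logits - logits.max(axis=-1, keepdims=True)
    exp = np.exp(logits)
    return exp / exp.sum(axis=-1, keepdims=True)


def to_3_class(label):
    """Map a 7-class label name to its coarse 3-class label."""
    return TO_3[label]
```

Switching between the two problems then only changes the second dimension of `weights` (7 or 3) and the labels used for training.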

Next, we needed to reformulate our loss function. It should account for both tasks at once – emotion and landmark prediction. We define a joint loss with two terms: the first is the distance between the predicted landmarks and the ground truth, normalized by the distance between the pupils, and the second is the cross-entropy loss for emotion classification.
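A rough sketch of such a joint loss for a single face could look like the code below. It assumes the common 68-point landmark layout (eye points at indices 36–41 and 42–47), approximates each pupil by the centroid of its eye landmarks, and simply adds the two terms with weights that default to 1. All of these are illustrative assumptions; the actual weighting in our model may differ.

```python
import numpy as np

# Assumed indices of the eye landmarks in the common 68-point layout.
LEFT_EYE = slice(36, 42)
RIGHT_EYE = slice(42, 48)


def landmark_loss(pred, gt):
    """Mean point-to-point error divided by the inter-pupil distance.

    pred, gt: (68, 2) arrays of (x, y) landmark coordinates.
    """
    point_error = np.linalg.norm(pred - gt, axis=1).mean()
    # Pupils are approximated by the centroids of the eye landmarks (assumption).
    pupil_distance = np.linalg.norm(
        gt[LEFT_EYE].mean(axis=0) - gt[RIGHT_EYE].mean(axis=0)
    )
    return point_error / pupil_distance


def emotion_loss(probs, label):
    """Cross entropy for one sample: -log of the probability of the true class."""
    return -np.log(probs[label] + 1e-12)


def joint_loss(pred, gt, probs, label, landmark_weight=1.0, emotion_weight=1.0):
    """Weighted sum of the landmark term and the emotion term.

    The two weights are hypothetical knobs, not values from the original model.
    """
    return (landmark_weight * landmark_loss(pred, gt)
            + emotion_weight * emotion_loss(probs, label))
```

Normalizing by the pupil distance keeps the landmark term comparable across faces of different sizes, so neither large nor small faces dominate the gradient.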

Finding a huge training dataset that contains both emotion and landmark labels was easier than I thought. I was lucky to stumble upon the recent (2017) AffectNet database, which contains over 1M face images collected from the Internet by querying three major search engines with 1250 emotion-related keywords in six different languages.

Where is the AI looking?

Image 3. Highlighted face regions that EmotionalDAN looks at to predict emotion

Apart from knowing the accuracy of your model, it is even more exciting to get a grasp of how the model learns or how it makes a decision. To gain some interpretability, we applied a popular technique called Grad-CAM, which provides visual explanations from deep networks via gradient-based localization.
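For readers who have not met Grad-CAM before, the core computation is small enough to sketch in NumPy. The function below assumes you have already extracted, for one image, the activations of a convolutional layer and the gradients of the chosen class score with respect to those activations (how you obtain them depends on your framework and is not shown). The function and argument names are made up for this example.

```python
import numpy as np


def grad_cam(feature_maps, gradients):
    """Compute a Grad-CAM heat map for one image.

    feature_maps: (H, W, K) activations of a convolutional layer.
    gradients:    (H, W, K) gradients of the class score w.r.t. those activations.
    Returns an (H, W) map scaled to [0, 1].
    """
    # Channel importance: gradients averaged over the spatial dimensions.
    channel_weights = gradients.mean(axis=(0, 1))  # shape (K,)
    # Weighted sum of the feature maps, then ReLU to keep positive evidence only.
    cam = np.maximum((feature_maps * channel_weights).sum(axis=-1), 0.0)
    peak = cam.max()
    # Scale to [0, 1] unless the map is all zeros.
    return cam / peak if peak > 0 else cam
```

The resulting low-resolution map is typically upsampled to the input size and overlaid on the face image.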

What’s really interesting is that even though we did not feed any emotion-related spatial information to the network, the model learns by itself which face regions to look at when trying to understand facial expressions. We humans intuitively look at a person’s eyes and mouth to notice a smile or sadness, but a neural network only sees a matrix of pixels.

Looking at the Grad-CAM activations, it appears the model figured out that the eyes and mouth are the most important indicators of the expressed emotion. Other regions that were often activated include the forehead (surprise, fear) and the nose (disgust).

1. Disgust: 0.69 Anger: 0.3 Neutral: 0.04

2. Surprise: 0.9 Fear: 0.02 Anger: 0.02

3. Happiness: 0.999

4. Happiness: 0.98 Neutral: 0.01

5. Anger: 0.76 Sadness: 0.22

6. Surprise: 0.98 Neutral: 0.01

7. Anger: 0.73 Neutral: 0.09 Sadness: 0.01

Show me your face, EmotionalDAN

Another interesting thing to do was to check how those activated regions vary per category. To do that, I took face images from the test set, calculated Grad-CAM activations on them, grouped them by emotion label and averaged each group.
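The grouping and averaging step is straightforward. Here is a small sketch, assuming the Grad-CAM maps have already been computed on aligned faces and stacked into one array of equal-sized maps; the function name is hypothetical.

```python
import numpy as np


def mean_maps_by_label(cams, labels):
    """Average activation maps per emotion label.

    cams:   (N, H, W) array of Grad-CAM maps, one per test image.
    labels: length-N sequence of emotion labels (names or integer ids).
    Returns a dict mapping each label to its (H, W) mean map.
    """
    cams = np.asarray(cams, dtype=float)
    labels = np.asarray(labels)
    return {
        # .item() converts the NumPy scalar back to a plain Python str or int.
        label.item(): cams[labels == label].mean(axis=0)
        for label in np.unique(labels)
    }
```

Averaging pixel by pixel only makes sense because the faces were aligned beforehand, so the same map position corresponds roughly to the same face region in every image.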

The mean maps don’t look that different between labels, which is not surprising given that we only check which face regions are activated, not how they are activated. Still, there is something emotional about them. For example, I love how the mean activations for disgust look really disgusted and unhappy.

Want more?

If you are interested in numerical results on how our model compares against benchmarks (spoiler alert: it kicks ass!), I recommend adding our paper from this year’s CVPR workshops to your reading list. For further reading, there is also an extended version on arXiv (currently under review for publication).

For those who are into fewer words and more code, there is also a GitHub repo with the EmotionalDAN implementation in TensorFlow. Enjoy!

