Introduction

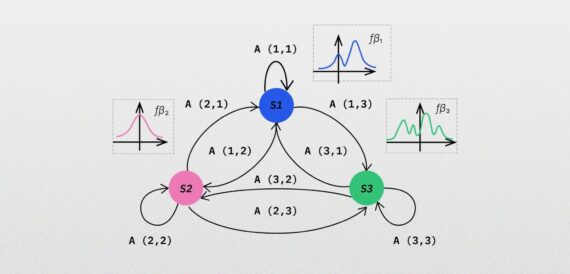

Lately, I’ve been looking into the problem of predicting the popularity of videos promoted on Facebook. Popularity was defined here as the number of views of a video relative to the number of views of all other videos in a given time frame. In the process, videos that were already somewhat popular were promoted, i.e. “boosted” in Facebook’s parlance. In the next step, I measured how that action affected popularity a day later, and it turned out that in many cases the popularity decreased (see the Figure below). This seemed strange. The main reason for the effect turned out to be a peculiar statistical phenomenon called regression to the mean (RTM).

Figure: Distribution of the increase in popularity of the most popular videos (control group) after a given number of hours since publication. The X axis corresponds to the number of hours since publication, while the Y axis corresponds to the percentile increase in the number of views compared to the views of all other videos in a given time frame. Individual box plots show the distribution of values for consecutive hours since publication. One can see that the popularity decrease is larger for recently published videos than for older ones, i.e. the RTM effect is more clearly visible for the recent videos.

Historical background

RTM was first described in the 19th century by Sir Francis Galton, the inventor of statistical correlation. To illustrate the phenomenon, Galton pointed out that very tall parents tend to have children shorter than themselves, while very short parents tend to have children taller than themselves [1][4]. The phenomenon seemed so unintuitive and strange that he needed the help of the best minds of 19th-century Britain to make sense of it [2]. Even nowadays the effect is so difficult to communicate and comprehend that many statistics teachers dread the classes in which the topic comes up, and their students often leave with only a vague understanding of the concept [2]. Nevertheless, I’ll try to shed some light on it.

Explanation

RTM is a statistical phenomenon in which extreme measurements of a random variable tend to be followed by measurements closer to the mean.

A nice explanation of this phenomenon is given in Wikipedia [4]. Let’s consider a group of students who take a 100-question test where the answer to each question is either “yes” or “no”. If the students choose the answers completely at random, one can expect the mean result to be around 50 questions answered correctly. However, some of the students would get much worse results and some much better ones, simply by chance. Now, let’s select the 10% of the students who obtained the best results. Their mean would be above 50. If we give the selected students a similar test one more time, the expected mean result will again be 50, i.e. it will seem to “regress” to the mean value of the whole population. If, on the other hand, the result of the test were produced completely deterministically, the expected result in the second trial for the selected group would be the same as in the first one, i.e. no “regression” would be observed. The phenomena that we examine in practice are usually a mix of random and deterministic effects, and thus the magnitude of the RTM effect is somewhere between these two extremes.
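The thought experiment above is easy to check with a small simulation. The sketch below is only an illustration: the function name `simulate_guessing`, the number of students, and the random seed are assumptions made for this example and do not come from the sources.

```python
import random
import statistics


def simulate_guessing(n_students=10_000, n_questions=100,
                      top_fraction=0.10, seed=0):
    """Simulate students who guess every yes/no answer at random.

    Returns the mean score of all students on the first test, the mean
    first-test score of the best `top_fraction` of students, and the mean
    score of those same students on a second, independent test.
    """
    rng = random.Random(seed)

    def take_test():
        # Every question is a fair coin flip: correct with probability 0.5.
        return sum(rng.random() < 0.5 for _ in range(n_questions))

    first = [take_test() for _ in range(n_students)]

    # Select the students with the best first-test results.
    n_top = int(n_students * top_fraction)
    ranked = sorted(range(n_students), key=lambda i: first[i], reverse=True)
    top = ranked[:n_top]

    # Retest only the selected students; the outcome is pure chance again.
    second = [take_test() for _ in top]

    return (statistics.mean(first),
            statistics.mean(first[i] for i in top),
            statistics.mean(second))


if __name__ == "__main__":
    all_first, top_first, top_second = simulate_guessing()
    print(f"all students, first test:  {all_first:.1f}")
    print(f"top 10%, first test:       {top_first:.1f}")
    print(f"top 10%, second test:      {top_second:.1f}")
```

The selected group scores well above 50 on the first test by construction, while its second-test mean falls back to roughly 50, even though nothing about the students changed between the two tests.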

In the example above, the effect occurred at the group level; however, it also occurs at the individual level, i.e. when taking consecutive measurements of the same individual [1]. In the context of the example, suppose that a given student’s knowledge of the tested topic is at a level of, say, 70%. If his score in the first test is 80% (he was lucky), the second one will likely be closer to 70%.
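The individual-level case can be sketched in the same way. The model here is an assumption made for illustration: a knowledge level of 70% is interpreted as answering each question correctly with probability 0.7, independently of the other questions. The function name `retest_after_lucky_score` and its parameters are hypothetical.

```python
import random
import statistics


def retest_after_lucky_score(true_level=0.70, lucky_threshold=80,
                             n_questions=100, n_trials=20_000, seed=0):
    """Simulate one student taking many pairs of tests.

    Keeps only the pairs in which the first score was at least
    `lucky_threshold` and returns the mean first score, the mean second
    score, and the number of such "lucky" pairs.
    """
    rng = random.Random(seed)

    def take_test():
        # Assumed model: each answer is correct with probability true_level.
        return sum(rng.random() < true_level for _ in range(n_questions))

    lucky_first, following = [], []
    for _ in range(n_trials):
        first = take_test()
        second = take_test()
        if first >= lucky_threshold:
            lucky_first.append(first)
            following.append(second)

    return (statistics.mean(lucky_first),
            statistics.mean(following),
            len(lucky_first))


if __name__ == "__main__":
    first_mean, second_mean, n_lucky = retest_after_lucky_score()
    print(f"lucky first tests: {n_lucky}")
    print(f"mean first score:  {first_mean:.1f}")
    print(f"mean second score: {second_mean:.1f}")
```

Conditioning on a lucky first result pushes the first-test mean to 80 or more, while the second-test mean stays close to the student’s underlying level of 70. The deterministic part (knowledge) persists and the random part (luck) does not.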

Examples

Let’s take some other examples of situations where the RTM effect occurs:

- Selecting the brightest children using some test, supplying them with educational materials and then testing their performance again some time later [4].

- Testing a positive effect of a drug some time after starting the treatment [1].

- Predicting if a successful sports team or an athlete will be successful during the next game [2], [4].

- Predicting whether the stores with the largest sales are going to maintain their sales level in the next year [2].

- Assessing whether high performance of a flight cadet during an acrobatic maneuver is going to be maintained during the next maneuver [2].

How to deal with it

How to account for this effect in practice?

- The best solution is to use an appropriate experimental design, i.e. to have a control group in your experiment and to account for the RTM effect by comparing the result in the treatment group with the result in the control group. The RTM effect can be estimated using a linear regression method called ANCOVA (see a good description of this approach in [3]).

- Calculate the effect explicitly [1, eq.1]. Note that in practice the additional information about the population required by this method is often not available so using it is often not possible.

- Use multiple measurements to reduce variability [1, eq. 2].
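To make the last two points more concrete, here is a minimal sketch of an explicit calculation under a simple normal model. The model is an assumption of this example: each measurement is a true value (mean `mu`, standard deviation `sigma_between`) plus independent measurement noise (standard deviation `sigma_within`), and subjects are selected when their baseline exceeds `cutoff`. The formula in the code was derived for this model and is meant to be in the spirit of the calculations referenced in [1]; it has not been checked against the paper’s notation, so treat the function `expected_rtm_effect` and its parameter names as hypothetical.

```python
import math
from statistics import NormalDist


def expected_rtm_effect(mu, sigma_between, sigma_within, cutoff,
                        n_baseline=1):
    """Expected drop from baseline to follow-up caused by RTM alone.

    Assumes normally distributed true values and measurement noise, and
    selection of subjects whose baseline (the mean of `n_baseline`
    measurements) is greater than `cutoff`.
    """
    # Averaging n baseline measurements divides the noise variance by n.
    within_var = sigma_within ** 2 / n_baseline
    sigma_total = math.sqrt(sigma_between ** 2 + within_var)

    # Standardised cutoff and the mean of the truncated standard normal.
    z = (cutoff - mu) / sigma_total
    std_normal = NormalDist()
    c_z = std_normal.pdf(z) / (1.0 - std_normal.cdf(z))

    return within_var / sigma_total * c_z


if __name__ == "__main__":
    # Illustrative numbers only, not taken from any of the sources.
    for n in (1, 2, 4):
        effect = expected_rtm_effect(mu=50, sigma_between=8,
                                     sigma_within=6, cutoff=60,
                                     n_baseline=n)
        print(f"baseline measurements: {n}, expected RTM effect: {effect:.2f}")
```

Two things are visible in the sketch. First, the calculation needs the population mean and both variance components, which is exactly the information that is often unavailable in practice. Second, basing the selection on the mean of several baseline measurements shrinks the noise term and with it the expected RTM effect.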

Conclusions

The main takeaways, following [1]:

- RTM is a ubiquitous phenomenon occurring in repeated measurements and should always be considered as a possible cause of an observed change.

- Its effect can be alleviated through better experimental design and use of appropriate statistical methods.

Sources

[1] Adrian G Barnett, Jolieke C van der Pols, Annette J Dobson: “Regression to the mean: what it is and how to deal with it”, International Journal of Epidemiology, 2005 with erratum

[2] Daniel Kahneman: “Thinking, fast and slow”, chapters 17 “Regression to the mean” and 18 “Taming intuitive predictions”, 2013

[4] https://en.wikipedia.org/wiki/Regression_toward_the_mean, accessed on 2018-01
